NVIDIA GPUs: Powering the Intelligence Revolution

As an IT specialist who has spent years architecting high-performance computing (HPC) environments and AI infrastructures, I have witnessed the transition of the Graphics Processing Unit (GPU) from a niche component for gamers into the very engine of the modern digital economy.

NVIDIA, in particular, has shifted from being a hardware manufacturer to a foundational platform company. Below is a comprehensive, deep-dive exploration of NVIDIA GPUs, their architecture, benefits, and the transformative role they play in today’s technological landscape.

The Silicon Revolution: A Comprehensive Guide to NVIDIA GPU Architecture and Ecosystem

1. Introduction: Beyond the Frame Rate

In the early 2000s, a GPU was simply a co-processor designed to accelerate the rendering of 3D graphics by performing repetitive mathematical calculations in parallel. However, NVIDIA’s introduction of CUDA (Compute Unified Device Architecture) in 2006 changed everything. It allowed developers to use the GPU for general-purpose processing (GPGPU), turning a “graphics card” into a massively parallel computational beast.

Today, when we talk about NVIDIA GPUs like the H100, A100, or the latest Blackwell B200, we aren’t talking about pixels; we are talking about tensors, neural networks, and exascale computing.

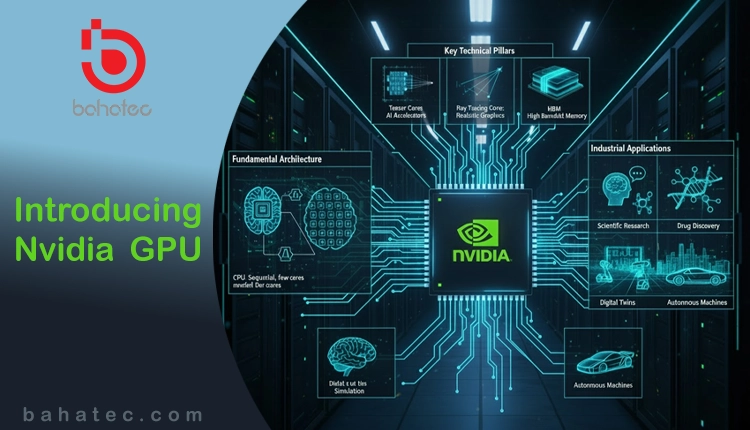

2. Fundamental Architecture: CPU vs. GPU

To understand the power of an NVIDIA GPU, one must understand the architectural difference between a CPU and a GPU.

- CPU (Central Processing Unit): Think of the CPU as a brilliant professor. It is designed for complex logic, branching, and sequential tasks. It has a few powerful cores optimized for low-latency execution.

- GPU (Graphics Processing Unit): Think of the GPU as an army of thousands of specialized workers. While each “worker” (core) is less powerful than a CPU core, they can perform thousands of simple mathematical tasks simultaneously.

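NVIDIA GPUs are built from many Streaming Multiprocessors (SMs), each containing on the order of a hundred CUDA Cores plus a few specialized Tensor Cores. A high-end data center GPU combines over a hundred SMs, for thousands of cores in total. This allows them to handle “embarrassingly parallel” workloads: tasks that can be broken down into thousands of independent pieces.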
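To make the "army of workers" idea concrete, here is a minimal sketch in plain Python that mimics how a CUDA-style kernel assigns one array element to each logical thread. It runs sequentially on the CPU and only illustrates the indexing model; the names `saxpy_kernel` and `launch` are my own illustrative helpers, not part of any NVIDIA API.

```python
def saxpy_kernel(block_idx, thread_idx, block_dim, a, x, y, out):
    """Work done by one logical thread: out[i] = a * x[i] + y[i]."""
    i = block_idx * block_dim + thread_idx  # global index of this thread
    if i < len(x):  # guard: the last block may reach past the end of the data
        out[i] = a * x[i] + y[i]


def launch(kernel, n, block_dim, *args):
    """Call the kernel once per (block, thread) pair covering n elements.

    A GPU would run these calls concurrently across its SMs;
    this CPU-side loop simply runs them one after another.
    """
    num_blocks = (n + block_dim - 1) // block_dim  # ceiling division
    for block_idx in range(num_blocks):
        for thread_idx in range(block_dim):
            kernel(block_idx, thread_idx, block_dim, *args)


x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [10.0, 20.0, 30.0, 40.0, 50.0]
out = [0.0] * len(x)
launch(saxpy_kernel, len(x), 4, 2.0, x, y, out)
print(out)  # prints [12.0, 24.0, 36.0, 48.0, 60.0]
```

Because no invocation reads a value written by another, the calls can run in any order or all at once, which is exactly what makes the workload embarrassingly parallel.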

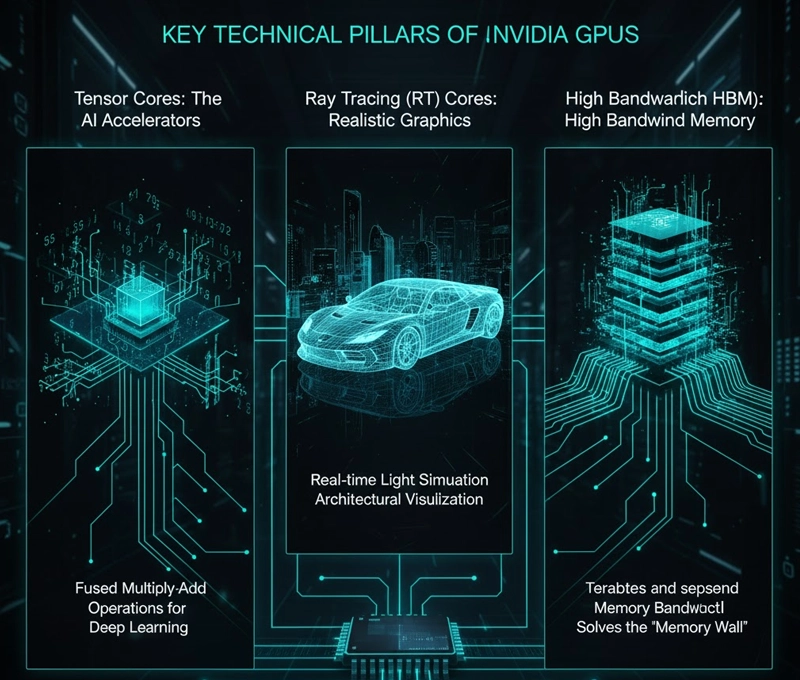

3. Key Technical Pillars of NVIDIA GPUs

- Tensor Cores: The AI Accelerators

  Introduced with the Volta architecture in 2017, Tensor Cores are specialized hardware units designed specifically for deep learning. They perform fused matrix multiply-accumulate operations (D = A × B + C) on small matrix tiles, the building block of the large matrix multiplications that dominate neural network training and inference.

- Ray Tracing (RT) Cores

  For the creative and engineering sectors, RT Cores provide hardware acceleration for simulating the physical behavior of light. This has revolutionized real-time rendering in films, architectural visualization, and high-end gaming.

- High Bandwidth Memory (HBM)

  Modern NVIDIA data center GPUs use HBM3 or HBM3e, providing several terabytes per second of memory bandwidth. In AI workloads, the bottleneck is often not the processing speed, but how fast data can be fed into the cores. HBM mitigates this “memory wall” problem.
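Two of these pillars can be sketched with a little arithmetic. The snippet below is a rough illustration, not a model of any real chip: `mma_tile` shows the multiply-accumulate a Tensor Core performs on a small tile, and the other two helpers apply a roofline-style check to decide whether a matrix multiplication is limited by memory or by compute. The peak throughput and bandwidth values are round placeholder numbers I picked for the example, and the traffic estimate assumes each matrix crosses the memory bus exactly once.

```python
def mma_tile(a, b, c):
    """Return D = A @ B + C for small square tiles given as nested lists."""
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) + c[i][j] for j in range(n)]
        for i in range(n)
    ]


def matmul_intensity(n, bytes_per_element):
    """FLOPs per byte for an n x n matmul under idealized, perfect caching."""
    flops = 2 * n**3                         # one multiply and one add per term
    traffic = 3 * n * n * bytes_per_element  # read A, read B, write the result
    return flops / traffic


def limiting_factor(intensity, peak_flops, bandwidth):
    """Roofline-style check: below the ridge point, memory is the limit."""
    ridge = peak_flops / bandwidth  # FLOPs the chip can do per byte delivered
    return "memory-bound" if intensity < ridge else "compute-bound"


# Illustrative round numbers, NOT the specification of any product.
PEAK_FLOPS = 1.0e15  # assumed 1 PFLOP/s
BANDWIDTH = 3.0e12   # assumed 3 TB/s

d = mma_tile([[1, 2], [3, 4]], [[1, 0], [0, 1]], [[10, 10], [10, 10]])
print(d)  # prints [[11, 12], [13, 14]]

for n in (128, 8192):
    # 2 bytes per element corresponds to a 16-bit number format
    print(n, limiting_factor(matmul_intensity(n, 2), PEAK_FLOPS, BANDWIDTH))
```

Under these assumptions the small multiplication comes out memory-bound and the large one compute-bound, which is why both fast matrix units and fast memory matter.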

4. The Benefits of NVIDIA GPUs in the Enterprise

As an IT specialist, I often justify GPU investments to stakeholders based on these four pillars:

- Massive Throughput: Highly parallel tasks that take weeks on a standard CPU cluster (like genomic sequencing analysis or climate modeling) can often be completed in hours on an NVIDIA DGX system.

- Energy Efficiency: A single H200 draws significant power (up to 700 W), but for well-parallelized workloads it can replace many CPU-only servers. On a per-calculation basis, GPUs are far more energy-efficient for AI and HPC.

- The CUDA Ecosystem: This is NVIDIA’s “moat.” Millions of developers use CUDA. Almost every AI framework (PyTorch, TensorFlow) is optimized first for NVIDIA hardware.

- Scalability via NVLink: Unlike standard PCIe connections, NVIDIA NVLink gives GPUs a much higher-bandwidth direct link to one another, so multiple GPUs can share memory and exchange data fast enough to behave much like a single, larger processor.
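The energy argument comes down to simple arithmetic, which I usually sketch for stakeholders along these lines. Apart from the 700 W figure mentioned above, every number here is a hypothetical placeholder chosen to keep the math easy, and "work units" stands in for whatever the real workload measures (tokens, simulation steps, samples).

```python
import math


def energy_per_unit(power_watts, units_per_second):
    """Joules spent per unit of work (one watt is one joule per second)."""
    return power_watts / units_per_second


def servers_to_match(target_units_per_second, server_units_per_second):
    """Number of servers needed to reach a target throughput."""
    return math.ceil(target_units_per_second / server_units_per_second)


# Hypothetical placeholder figures, not measurements of real hardware.
GPU_WATTS, GPU_RATE = 700.0, 100.0  # one accelerator: watts, work units/s
CPU_WATTS, CPU_RATE = 350.0, 5.0    # one assumed CPU-only server

gpu_joules = energy_per_unit(GPU_WATTS, GPU_RATE)   # 7.0 J per unit
cpu_joules = energy_per_unit(CPU_WATTS, CPU_RATE)   # 70.0 J per unit
cpu_servers = servers_to_match(GPU_RATE, CPU_RATE)  # 20 servers
cpu_cluster_watts = cpu_servers * CPU_WATTS         # 7000.0 W

print(f"GPU: {gpu_joules} J/unit, CPU server: {cpu_joules} J/unit")
print(f"{cpu_servers} CPU servers ({cpu_cluster_watts} W) match one GPU ({GPU_WATTS} W)")
```

With real numbers the ratio depends heavily on the workload, so the inputs should come from benchmarks of the actual application rather than from spec sheets.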

5. Industrial Applications: Where the Magic Happens

I. Artificial Intelligence & LLMs

The most prominent use case today is the training of Large Language Models (LLMs). Training models like GPT-4 or Llama 3 requires thousands of NVIDIA GPUs working in unison. The GPUs execute both the forward pass and the “backpropagation” step, in which the model’s weights are adjusted as it learns from trillions of tokens of data.
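As a toy illustration of what that training loop does, the sketch below fits a single linear "neuron" with gradient descent in plain Python. It is nowhere near how an LLM is actually trained (no GPU, no framework, two parameters instead of billions), but the forward pass, backward pass, and weight update follow the same pattern. The function name, learning rate, and epoch count are my own choices for the example.

```python
def train_linear(samples, learning_rate=0.1, epochs=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in samples:
            pred = w * x + b         # forward pass
            error = pred - y         # derivative of 0.5 * error**2 w.r.t. pred
            grad_w += error * x / n  # backward pass: chain rule through w * x
            grad_b += error / n      # backward pass: chain rule through + b
        w -= learning_rate * grad_w  # parameter update
        b -= learning_rate * grad_b
    return w, b


data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # points on y = 2x + 1
w, b = train_linear(data)
print(round(w, 3), round(b, 3))  # close to 2.0 and 1.0
```

In a real model the per-sample loop becomes large matrix multiplications over batches of tokens, which is the part that maps so well onto thousands of GPU cores.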

II. Scientific Research & Healthcare

NVIDIA GPUs power many of the world’s fastest supercomputers.

- Drug Discovery: Simulating how a protein folds or how a drug molecule interacts with a virus.

- Genomics: Speeding up the analysis of DNA sequencing data, in some pipelines from days to minutes.

III. Professional Visualization & Digital Twins

Using NVIDIA Omniverse, companies create “Digital Twins” of entire factories. By simulating every physical variable on a GPU, engineers can predict failures or optimize workflows before a single brick is laid in the real world.

IV. Autonomous Machines

From self-driving cars to warehouse robots, NVIDIA’s Orin and Thor platforms provide the “brain” that processes sensor data (lidar, radar, cameras) in real time to make split-second decisions.

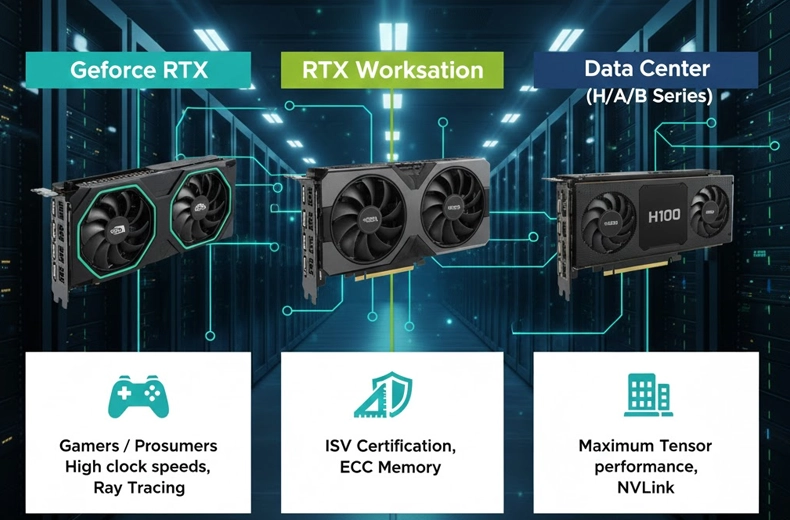

6. Comparing Modern NVIDIA Families

| Series | Target Audience | Key Feature |
| --- | --- | --- |
| GeForce RTX | Gamers / Prosumers | High clock speeds, Ray Tracing |
| RTX Workstation | Designers / Engineers | ISV Certification, ECC Memory |
| Data Center (H/A/B Series) | Enterprises / Cloud | Maximum Tensor performance, NVLink |

7. The Specialist’s Perspective on Deployment Strategy

When deploying NVIDIA GPUs, we don’t just “plug and play.” We must consider:

- Thermal Management: Data center GPUs generate immense heat. Liquid cooling is becoming a standard requirement for Blackwell-class deployments.

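- Interconnects: To avoid bottlenecks between servers, we use NVIDIA (formerly Mellanox) InfiniBand networking, so that inter-node communication keeps pace with the GPUs as closely as possible. Even so, links between servers remain slower than the memory and NVLink paths inside a node.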

- Software Stack: We utilize NVIDIA AI Enterprise, a suite of tools that ensures the hardware is secure, supported, and running optimized containers.
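To show why the interconnect matters so much, here is a back-of-the-envelope estimate I find useful when sizing a cluster. It assumes gradients are synchronized with a ring all-reduce, one common collective pattern that I am using purely for illustration, and it counts only the bandwidth term while ignoring latency. The message size and link speeds are assumed example values, not figures from any specific deployment.

```python
def ring_allreduce_seconds(num_gpus, message_bytes, link_bytes_per_second):
    """Bandwidth-only estimate for one ring all-reduce.

    Each participant sends (and receives) 2 * (N - 1) / N of the message
    over its link; per-step latency is ignored.
    """
    if num_gpus < 2:
        return 0.0  # nothing to exchange with a single participant
    volume = 2 * (num_gpus - 1) / num_gpus * message_bytes
    return volume / link_bytes_per_second


GRADIENT_BYTES = 1.0e9  # hypothetical 1 GB of gradients per training step
SLOW_LINK = 12.5e9      # assumed 100 Gb/s link, expressed in bytes per second
FAST_LINK = 50.0e9      # assumed 400 Gb/s link, expressed in bytes per second

slow = ring_allreduce_seconds(8, GRADIENT_BYTES, SLOW_LINK)
fast = ring_allreduce_seconds(8, GRADIENT_BYTES, FAST_LINK)
print(f"{slow:.3f} s vs {fast:.3f} s per all-reduce")
```

If the GPUs finish a training step faster than the slower figure, they sit idle waiting for the network, which is the bottleneck a faster fabric is meant to remove.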

8. Conclusion: The Future is Blackwell and Beyond

We are currently entering the Blackwell era. With the B200, NVIDIA is pushing the boundaries of “Generative AI” efficiency: the company claims up to 30x faster LLM inference for its Blackwell-based GB200 NVL72 system compared with the same number of H100 GPUs.

For any organization looking to remain relevant in the next decade, GPU compute is no longer an “option”—it is the fundamental infrastructure. Whether you are building a private cloud, optimizing a supply chain, or developing the next great AI application, NVIDIA GPUs are the gold standard of the industry.